Bridging the Gap in Conversational Agents: Discover MIBURI's Expressive Gesture Synthesis!

The world of technology is evolving, and so are our interactions with machines. Researchers at the Max Planck Institute for Informatics and Saarland University have taken a significant step forward with their framework, MIBURI. The system addresses a key limitation of current conversational agents by generating expressive gestures and facial expressions in real time, paving the way for more natural and engaging human-computer interaction.

The Need for Embodied Communication

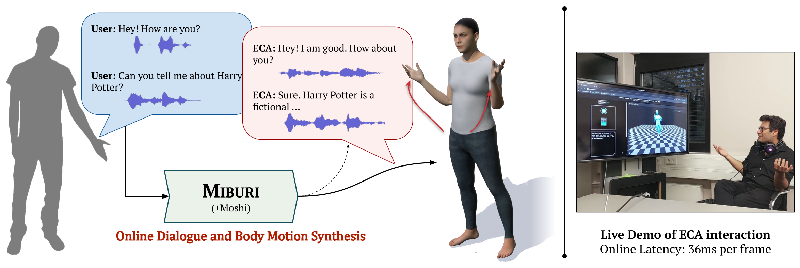

Embodied Conversational Agents (ECAs) aim to imitate face-to-face human interaction, not just through spoken words but also through gestures and facial expressions. However, traditional conversational agents, often powered by large language models (LLMs), struggle to deliver engaging non-verbal communication. Their gestures tend to be rigid and lack diversity, so the result falls short of human-like interaction. MIBURI seeks to fill this gap by synchronizing full-body gestures with real-time dialogue, creating a more immersive communication experience.

How MIBURI Works

At the heart of MIBURI is a two-dimensional causal framework used to generate a hierarchy of gestures. During a conversation, the system interprets speech and generates body movements that reflect the mood and context of the dialogue. Unlike previous models that require both past and future speech data, MIBURI uses only past inputs for its predictions. Because it never has to wait for speech that has not yet been spoken, it can operate in real time and keep pace with the natural dynamics of human conversation.
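To make the "past inputs only" idea concrete, here is a minimal Python sketch of causality along the time axis. It is not MIBURI's model: the running-mean "predictor", the `speech_energy` values, and both function names are hypothetical stand-ins chosen purely for illustration, and the sketch covers only the temporal dimension of the framework described above.

```python
from typing import List


def causal_mask(n: int) -> List[List[bool]]:
    """mask[t][s] is True when step t is allowed to look at step s (s <= t)."""
    return [[s <= t for s in range(n)] for t in range(n)]


def causal_running_mean(signal: List[float]) -> List[float]:
    """Toy stand-in for a predictor: the output at step t uses only signal[0..t]."""
    out: List[float] = []
    total = 0.0
    for count, value in enumerate(signal, start=1):
        total += value
        out.append(total / count)
    return out


# Hypothetical per-frame speech feature (made-up numbers).
speech_energy = [0.0, 1.0, 2.0, 3.0]

full = causal_running_mean(speech_energy)
prefix = causal_running_mean(speech_energy[:2])

# Outputs for the first two frames are identical whether or not the
# later frames exist yet, which is what makes streaming possible.
assert full[:2] == prefix
```

The final assertion captures the property that matters for real-time use: an output that has already been produced never needs to be revised when new speech arrives.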

Innovative Features of MIBURI

MIBURI employs body-part aware gesture codecs that break movements down into discrete tokens. This design helps keep gestures both expressive and diverse, preventing the system from falling into a pattern of monotonous movements. By building on a speech-based LLM token stream, MIBURI strikes a balance between generating natural gestures and maintaining low latency, which is critical for real-time applications.
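As a rough illustration of what "breaking movements into discrete tokens per body part" can look like, the sketch below uses plain nearest-neighbor vector quantization. This is an assumption made for illustration, not a description of MIBURI's codecs: the body-part names, the six-value pose layout, and the tiny hand-written codebooks are all hypothetical.

```python
from typing import Dict, List, Sequence

# Hypothetical layout: which entries of a 6-value pose vector belong to each part.
BODY_PARTS: Dict[str, List[int]] = {
    "upper_body": [0, 1],
    "hands": [2, 3],
    "face": [4, 5],
}

# Hypothetical per-part codebooks; a real system would learn these from data.
CODEBOOKS: Dict[str, List[List[float]]] = {
    "upper_body": [[0.0, 0.0], [1.0, 1.0]],
    "hands": [[0.0, 0.0], [0.5, -0.5], [1.0, 0.0]],
    "face": [[0.0, 0.0], [0.0, 1.0]],
}


def nearest_code(vec: Sequence[float], codebook: List[List[float]]) -> int:
    """Return the index of the codebook entry closest to vec (squared distance)."""
    def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(range(len(codebook)), key=lambda i: sq_dist(vec, codebook[i]))


def tokenize_pose(pose: Sequence[float]) -> Dict[str, int]:
    """Map one pose frame to one discrete token per body part."""
    return {
        part: nearest_code([pose[i] for i in idx], CODEBOOKS[part])
        for part, idx in BODY_PARTS.items()
    }


def detokenize(tokens: Dict[str, int]) -> List[float]:
    """Rebuild an approximate pose frame from per-part tokens."""
    pose = [0.0] * 6
    for part, token in tokens.items():
        for i, value in zip(BODY_PARTS[part], CODEBOOKS[part][token]):
            pose[i] = value
    return pose


pose = [0.9, 1.1, 0.4, -0.6, 0.1, 0.2]
tokens = tokenize_pose(pose)   # {'upper_body': 1, 'hands': 1, 'face': 0}
approx = detokenize(tokens)    # [1.0, 1.0, 0.5, -0.5, 0.0, 0.0]
```

Keeping a separate codebook per body part means each part can change its token independently, which is one simple way a token-based representation can avoid repeating the same whole-body pose.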

Impressive Results

Comparative evaluations show that MIBURI outperforms existing baselines, delivering contextually appropriate and visually engaging gestures. In user studies, participants significantly preferred MIBURI-generated gestures over those produced by traditional systems, which points to its potential for improving human-robot and human-computer interaction.

The Future of Interactive Agents

As we move towards a future where digital interactions are more human-like, MIBURI stands out as a beacon of innovation. This framework not only exemplifies the technological advancements in gesture synthesis but also encourages further exploration into integrating body dynamics in conversational agents. As researchers strive to make ECAs more responsive and engaging, MIBURI's success lays the groundwork for the next generation of naturally interactive systems.

For a firsthand experience, visit the project's demo page and witness the magic of MIBURI in action!

Authors: M. Hamza Mughal, Rishabh Dabral, Vera Demberg, Christian Theobalt