Revolutionizing Urban Safety: How URBANCLIPATLAS Transforms Long-Duration Video Analysis

Cities change constantly, so understanding what happens at critical locations such as street intersections is essential. A team of researchers has developed URBANCLIPATLAS, a visual analytics framework that simplifies retrieving and interpreting events from long urban video recordings.

Understanding the Challenge

Urban planners and transportation engineers must extract meaningful insights from hours of recorded footage to improve safety and mobility in cities. Traditional methods rely on painstaking manual review, which often misses critical moments. URBANCLIPATLAS aims to change that by automatically segmenting long videos into manageable clips and providing context-rich insights.

Introducing URBANCLIPATLAS

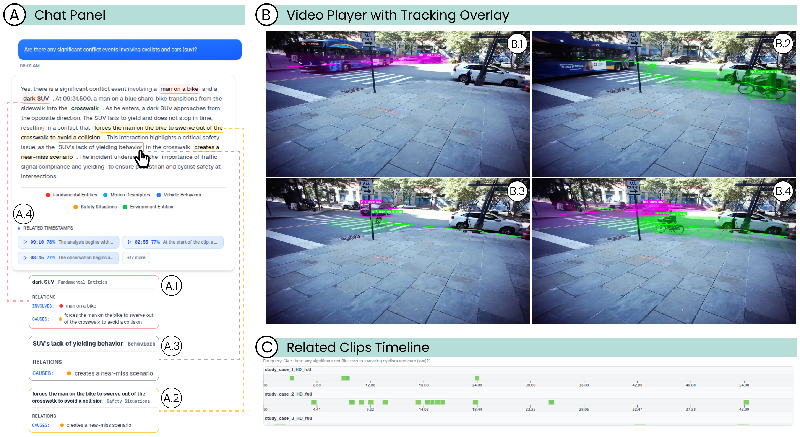

The system uses retrieval-augmented generation (RAG) and vision-language models (VLMs) to identify, extract, and explain urban events. With this structured approach, analysts can retrieve the video segments relevant to a specific query without scrubbing through hours of footage.

URBANCLIPATLAS combines entity extraction with video grounding so that analysts receive enhanced visual evidence alongside textual explanations, giving them a cohesive understanding of urban events.

How It Works

At the heart of URBANCLIPATLAS is its three-stage workflow:

- Preprocessing: Long videos are segmented into short clips and described using a language model, allowing for efficient indexing and retrieval.

- Augmented Narrative Generation: When a user poses a question, the system enriches the query to match indexed descriptions and generates narrative answers that highlight the relevant events.

- Visualization: An interactive visual interface enables users to explore the retrieved video segments alongside narrative explanations and entity-level structures.
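
To make the first two stages more concrete, here is a minimal Python sketch of segmenting a long recording into clips and retrieving clips by matching a query against their text descriptions. It is an illustration under stated assumptions, not the authors' implementation: the names `Clip`, `segment`, and `retrieve` are hypothetical, the clip descriptions are written by hand where the real system would generate them with a model, and a simple bag-of-words cosine similarity stands in for whatever query enrichment and retrieval method URBANCLIPATLAS actually uses.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Clip:
    """Hypothetical record for one short clip cut from a long video."""
    start_s: float
    end_s: float
    description: str = ""  # assumed to be filled in by a captioning model


def segment(duration_s: float, clip_len_s: float) -> list[Clip]:
    """Split the interval [0, duration_s) into consecutive fixed-length clips."""
    clips = []
    start = 0.0
    while start < duration_s:
        clips.append(Clip(start, min(start + clip_len_s, duration_s)))
        start += clip_len_s
    return clips


def tokens(text: str) -> Counter:
    """Lowercase bag-of-words representation of a text."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, clips: list[Clip], k: int = 2) -> list[Clip]:
    """Return up to k clips whose descriptions overlap most with the query."""
    q = tokens(query)
    scored = [(cosine(q, tokens(c.description)), c) for c in clips]
    ranked = sorted(scored, key=lambda sc: sc[0], reverse=True)
    return [clip for score, clip in ranked if score > 0][:k]


# Toy example: a 25-second recording cut into 10-second clips.
clips = segment(duration_s=25.0, clip_len_s=10.0)
clips[0].description = "a cyclist swerves around a turning car"
clips[1].description = "pedestrians wait at the crosswalk"
clips[2].description = "a large truck blocks the pedestrian crossing"

hits = retrieve("truck blocking crossing", clips, k=1)
for clip in hits:
    print(f"{clip.start_s:.0f}s-{clip.end_s:.0f}s: {clip.description}")
```

In this toy setup only the third clip shares words with the query, so it is the one returned. A real deployment would likely replace the word-overlap score with learned embeddings, which is exactly the kind of gap that query enrichment is meant to close.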

Case Studies Highlighting Effectiveness

Two case studies using the StreetAware dataset illustrate URBANCLIPATLAS's capabilities. The first study focused on identifying conflict events between cyclists and vehicles, where the system successfully retrieved relevant clips and detailed their interactions. The second study showcased how the platform could identify risks associated with large vehicles obstructing pedestrian crossings, emphasizing its potential in enhancing public safety.

Acknowledging Limitations and Future Directions

While URBANCLIPATLAS presents an innovative solution for urban video analytics, there are inherent challenges in ensuring reliable entity identification and grounding against visual evidence. Moving forward, the team plans to scale the system and improve its robustness by refining its grounding methods and expanding its application to different urban environments.

In conclusion, URBANCLIPATLAS not only streamlines the process of analyzing urban videos but also augments the capabilities of urban planners and transportation engineers, empowering them to foster safer and more efficient urban environments.

Authors: Juanpablo Heredia (Juan1t0_H)