Unraveling AI Collaboration: How Game Theory Supercharges LLM Cooperation

As language models become increasingly integral to daily life, their ability to cooperate with one another matters more and more. A recent research paper, "fCoopEval: Benchmarking Cooperation-Sustaining Mechanisms and LLM Agents in Social Dilemmas," examines this capability directly, measuring the strengths and weaknesses of large language models (LLMs) in situations where agents must cooperate to do well.

Understanding Social Dilemmas

At the core of this research lies the concept of social dilemmas: situations in which individual interests conflict with the collective good. The researchers studied games such as the Prisoner's Dilemma and the Trust Game to see how models behave when they must choose between benefiting themselves and benefiting the group. Surprisingly, many modern LLMs tend to "defect," prioritizing individual gain over group welfare, a concerning trend that becomes more pronounced as their reasoning capabilities improve.
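To make the dilemma concrete, here is a minimal Python sketch of a one-shot Prisoner's Dilemma. The payoff values (5, 3, 1, 0) follow the standard textbook ordering and are an illustrative assumption, not numbers taken from the paper. The sketch shows why defecting is the individually "rational" choice even though mutual cooperation produces more total welfare.

```python
# Row player's payoffs in a one-shot Prisoner's Dilemma.
# Illustrative textbook values (T > R > P > S), not taken from the paper.
PAYOFF = {
    ("C", "C"): 3,  # R: reward for mutual cooperation
    ("C", "D"): 0,  # S: sucker's payoff
    ("D", "C"): 5,  # T: temptation to defect
    ("D", "D"): 1,  # P: punishment for mutual defection
}
ACTIONS = ("C", "D")


def best_response(opponent_action):
    """Return the action that maximizes the row player's payoff against a fixed opponent action."""
    return max(ACTIONS, key=lambda a: PAYOFF[(a, opponent_action)])


def total_welfare(a, b):
    """Sum of both players' payoffs; the game is symmetric, so player 2's payoff is PAYOFF[(b, a)]."""
    return PAYOFF[(a, b)] + PAYOFF[(b, a)]


if __name__ == "__main__":
    # Defection is the best response whatever the opponent does...
    print({opp: best_response(opp) for opp in ACTIONS})  # {'C': 'D', 'D': 'D'}
    # ...yet mutual cooperation yields more total welfare than mutual defection.
    print(total_welfare("C", "C"), total_welfare("D", "D"))  # 6 2
```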

Mechanisms for Cooperation

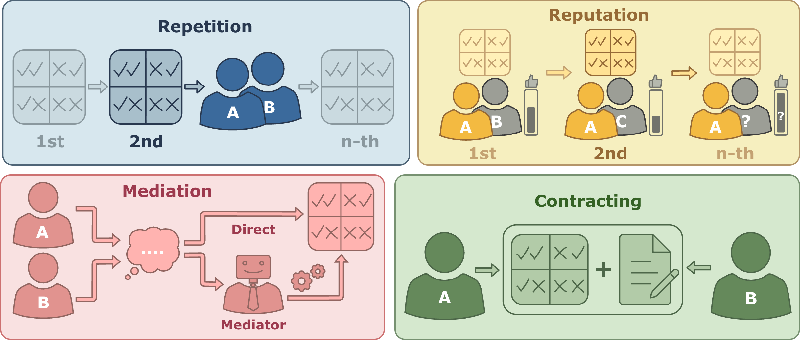

The study goes beyond merely diagnosing the problem and evaluates mechanisms designed to sustain cooperation among rational agents. Four distinct mechanisms were tested:

- Repetition: Players interact over multiple rounds, so each can condition future cooperation on the other's past actions.

- Reputation: Players are paired with new opponents each round but can use records of those opponents' past interactions to gauge their trustworthiness.

- Mediation: A third-party mediator is introduced to assist in decision-making, potentially helping players align their strategies.

- Contract: Players can agree in advance on compensation arrangements, such as payments tied to each other's actions, that make cooperation worthwhile for both sides.
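The repetition mechanism can be illustrated with tit-for-tat, a classic strategy from the game-theory literature, in an iterated Prisoner's Dilemma. This is a minimal sketch assuming the same illustrative payoffs (T=5, R=3, P=1, S=0); the strategies are textbook examples, not the LLM agents evaluated in the study. Because future rounds exist, a player who defects gains once and then faces retaliation.

```python
# Illustrative Prisoner's Dilemma payoffs (T=5, R=3, P=1, S=0); an assumption, not from the paper.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def tit_for_tat(opponent_history):
    """Cooperate in the first round, then copy the opponent's previous move."""
    return "C" if not opponent_history else opponent_history[-1]


def always_defect(opponent_history):
    """Ignore the history and defect every round (same signature as other strategies)."""
    return "D"


def play(strategy_a, strategy_b, rounds):
    """Play a repeated game and return each player's total payoff."""
    moves_a, moves_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each strategy sees only the opponent's past moves.
        a = strategy_a(moves_b)
        b = strategy_b(moves_a)
        score_a += PAYOFF[(a, b)]
        score_b += PAYOFF[(b, a)]
        moves_a.append(a)
        moves_b.append(b)
    return score_a, score_b
```

Over ten rounds, two tit-for-tat players earn 30 each, while an always-defect player facing tit-for-tat earns only 14: it wins the first round (5 points) and then meets mutual defection for the remaining nine (1 point each).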

Key Discoveries

The findings were both revealing and alarming. Without any cooperation mechanism in place, many LLMs defected. When contracting or mediation was available, however, cooperative outcomes improved significantly: contracting was found to recover up to 80% of collective welfare, a stark contrast to the outcomes observed without these interventions.
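Why can a contract recover so much welfare? A minimal sketch helps, assuming a hypothetical contract in which any player who defects pays a fixed `transfer` to the other player (the contracts in the paper may be structured differently) and the same illustrative payoffs as before. Transfers only move payoff between players, so they leave total welfare unchanged, but once the transfer is large enough (with these payoffs, anything above 2), cooperation becomes each player's best response regardless of what the other does.

```python
# Illustrative Prisoner's Dilemma payoffs (T=5, R=3, P=1, S=0); an assumption, not from the paper.
BASE = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}
ACTIONS = ("C", "D")


def contract_payoff(me, other, transfer):
    """Row player's payoff under a hypothetical contract: each defector pays `transfer` to its opponent."""
    payoff = BASE[(me, other)]
    if me == "D":
        payoff -= transfer  # pay the penalty for defecting
    if other == "D":
        payoff += transfer  # receive compensation when the opponent defects
    return payoff


def best_response(other, transfer):
    """Action that maximizes the row player's contract payoff against a fixed opponent action."""
    return max(ACTIONS, key=lambda a: contract_payoff(a, other, transfer))


if __name__ == "__main__":
    for t in (0, 3):
        print(t, {other: best_response(other, t) for other in ACTIONS})
```

With `transfer=0` the best response is `"D"` against either action, reproducing the original dilemma; with `transfer=3` it is `"C"` against either action, so mutual cooperation becomes the stable outcome.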

Moreover, the study showed that under evolutionary pressure, in settings where cooperation mechanisms were in place, collaborative behavior among the LLMs increased, reaching up to 90% cooperation across a range of scenarios. This suggests that even when LLMs are pushed to optimize their own payoffs, supportive frameworks can steer them toward mutually beneficial interactions.
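Evolutionary pressure is often modeled with replicator dynamics, in which strategies that earn above-average payoffs grow their share of the population. The sketch below uses that standard model as a stand-in (the paper's own evolutionary setup may differ), together with the illustrative Prisoner's Dilemma payoffs and the same hypothetical "defector pays 3" contract. Without the contract, the share of cooperators collapses toward zero; with it, cooperators take over.

```python
# Illustrative Prisoner's Dilemma payoffs (T=5, R=3, P=1, S=0); an assumption, not from the paper.
BASE = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def with_transfer(payoff, transfer):
    """Hypothetical contract: each defector pays `transfer` to its opponent."""
    return {
        (me, other): v - (transfer if me == "D" else 0) + (transfer if other == "D" else 0)
        for (me, other), v in payoff.items()
    }


def replicator_step(x, payoff):
    """One discrete-time replicator update of the cooperator share x (payoffs must be non-negative)."""
    f_c = x * payoff[("C", "C")] + (1 - x) * payoff[("C", "D")]  # average payoff of a cooperator
    f_d = x * payoff[("D", "C")] + (1 - x) * payoff[("D", "D")]  # average payoff of a defector
    mean = x * f_c + (1 - x) * f_d  # population-average payoff
    return x * f_c / mean


def evolve(x0, payoff, steps=200):
    """Iterate the replicator update from an initial cooperator share x0."""
    x = x0
    for _ in range(steps):
        x = replicator_step(x, payoff)
    return x


if __name__ == "__main__":
    print(evolve(0.5, BASE))                    # close to 0: defection takes over
    print(evolve(0.5, with_transfer(BASE, 3)))  # close to 1: cooperation takes over
```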

Implications for Future AI Design

This research does not just present a series of experiments; it lays the groundwork for developing more sophisticated AI systems capable of collaborating in complex environments. As AI systems become more prevalent in industries like finance, healthcare, and diplomacy, the lessons learned from fCoopEval will be essential in designing LLMs that can effectively navigate mixed-motive situations.

In conclusion, the benchmark laid out by this research serves as both a warning and a guide: it highlights the risks that arise when modern AI systems interact, while also showing clear pathways toward more cooperative and ethical AI agents. The future of AI may depend not only on improving models' reasoning capabilities but equally on nurturing their ability to work together for the greater good.