Unraveling the Social Media Maze: How Behavioral Change Signals Manipulation

In an age where social media shapes public opinion and influences behavior, detecting online manipulation has become increasingly important. A recent study by Isuru Ariyarathne and colleagues from William & Mary and Indiana University examines whether changes in the behavior of social media accounts can serve as a signal for identifying deceptive practices. Their research offers a fresh perspective on detecting automated accounts and coordinated misinformation campaigns.

The Core Idea: Behavioral Changes as a Detection Tool

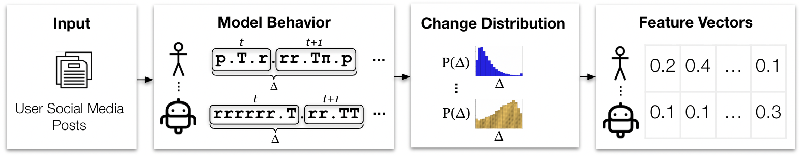

The main thrust of this research centers on the premise that social media accounts engaging in manipulative tactics often change their behavior to avoid detection. To tap into this phenomenon, the researchers adopted a method known as Behavioral Languages for Online Characterization (BLOC). This system encodes an account’s actions and content as strings of symbols, which allows for a new way of examining how account behavior evolves over time.
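To make the idea of encoding actions as symbols concrete, here is a minimal sketch in Python. The symbol table, the pause marker, and the `encode_actions` helper are assumptions made up for this illustration; they are not the actual BLOC alphabet or the authors' implementation.

```python
# Hypothetical symbol table for illustration only; not the real BLOC alphabet.
ACTION_SYMBOLS = {"post": "T", "reply": "P", "reshare": "R"}


def encode_actions(events, pause_seconds=3600):
    """Encode (timestamp_in_seconds, action) events as a string of symbols.

    Each action becomes one symbol. A "." is inserted whenever the gap
    between two consecutive events is at least `pause_seconds` (an assumed
    convention for marking pauses).
    """
    symbols = []
    previous_time = None
    for timestamp, action in sorted(events):
        if previous_time is not None and timestamp - previous_time >= pause_seconds:
            symbols.append(".")
        symbols.append(ACTION_SYMBOLS[action])
        previous_time = timestamp
    return "".join(symbols)


# Two quick actions, a pause of about two hours, then two reshares.
events = [(0, "post"), (60, "reply"), (7200, "reshare"), (7260, "reshare")]
encoded = encode_actions(events)
print(encoded)  # TP.RR
```

Once behavior is a string, standard sequence and text-analysis techniques can be applied to it, which is what makes comparing behavior over time tractable.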

By analyzing the sequences created using BLOC, the researchers identified patterns in how different types of accounts (authentic users, social bots, and accounts taking part in coordinated misinformation campaigns) exhibit behavioral change. The study suggests that not all changes should be viewed through the same lens: while authentic users may have stable yet evolving behaviors, automated accounts might show either minimal or erratic behavioral shifts.

Methodology: Analyzing Behavioral Patterns

The researchers segmented the BLOC strings representing user behavior into smaller parts to observe changes over time. Their analysis was twofold: they compared consecutive segments for short-term behavior changes and cumulative segments for longer-term patterns. Two distance measures—cosine distance and normalized compression distance—were employed to quantify these behavioral differences.
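The segmentation and the two distance measures can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' code: the fixed segment size, the use of single-symbol counts as vectors for cosine distance, zlib as the compressor for normalized compression distance, and the reading of "cumulative" as "everything seen so far versus the next segment" are all choices made here for the example.

```python
import math
import zlib
from collections import Counter


def split_segments(sequence, size):
    """Split a behavior string into consecutive fixed-size segments."""
    return [sequence[i:i + size] for i in range(0, len(sequence), size)]


def cosine_distance(a, b):
    """1 minus the cosine similarity of the symbol-count vectors of a and b."""
    counts_a, counts_b = Counter(a), Counter(b)
    dot = sum(counts_a[s] * counts_b[s] for s in counts_a)
    norm = math.sqrt(sum(v * v for v in counts_a.values())) * math.sqrt(
        sum(v * v for v in counts_b.values())
    )
    return 1.0 - dot / norm if norm else 1.0


def ncd(a, b):
    """Normalized compression distance, using zlib as the compressor."""

    def compressed_size(text):
        return len(zlib.compress(text.encode("utf-8")))

    size_a, size_b = compressed_size(a), compressed_size(b)
    size_ab = compressed_size(a + b)
    return (size_ab - min(size_a, size_b)) / max(size_a, size_b)


def consecutive_distances(segments, distance):
    """Short-term change: compare each segment with the one that follows it."""
    return [distance(x, y) for x, y in zip(segments, segments[1:])]


def cumulative_distances(segments, distance):
    """Longer-term change: compare all behavior so far with the next segment."""
    return [
        distance("".join(segments[:i]), segments[i])
        for i in range(1, len(segments))
    ]


# Toy behavior string: four posts, four replies, four posts.
segments = split_segments("TTTTPPPPTTTT", 4)
short_term = consecutive_distances(segments, cosine_distance)
long_term = cumulative_distances(segments, cosine_distance)
compression_based = consecutive_distances(segments, ncd)
```

In this toy example the consecutive cosine distances are large because each segment uses a different symbol from its neighbor, while the second cumulative distance is smaller because the final segment resembles part of the earlier history.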

The analysis showed that genuine accounts tend to change their behavior consistently, while social bots display either very low or very high variability in their behaviors. Coordinated inauthentic accounts tend to align their behavioral patterns closely within the same campaign but diverge significantly across different campaigns, which suggests a level of strategic operation.

Real-World Applications: Enhancing Detection Mechanisms

This research has practical implications for social media integrity. The researchers propose using behavioral change as a signal in tools designed to identify bots and coordinated disinformation campaigns. According to the study, classifiers built on this signal maintained a high level of accuracy in differentiating between authentic and inauthentic behaviors, an advantage over traditional detection systems that often rely on fixed features like content or user metadata.

Moreover, the study opens the door for more adaptable detection methodologies that can evolve alongside the strategies employed by malicious actors, supporting a more robust approach to combating online manipulation.

Conclusion: A Call to Action

As the digital landscape continues to evolve, so too must our strategies for identifying and mitigating threats. The insights from Ariyarathne and colleagues illustrate that focusing on behavioral changes can significantly enhance our ability to detect sociotechnical threats on social media. Policymakers, platform developers, and researchers alike should consider integrating these findings into their frameworks to empower users and maintain the integrity of online discourse.

For those interested in diving deeper into these methodologies, the authors have made their code available to encourage further research and application in this critical area of social media ethics and safety.

Authors: Isuru Ariyarathne, Gangani Ariyarathne, Alessandro Flammini, Filippo Menczer, Alexander C. Nwala